This started as a post on X, but it has gotten so long that I thought I’d put it here as well.

DeepSeek AI (Beijing/Hangzhou) reveals its innovative AI-HPC architecture, which leads on cost-performance.

Fire-Flyer AI-HPC: A Cost-Effective Software-Hardware Co-Design for Deep Learning

https://arxiv.org/pdf/2408.14158

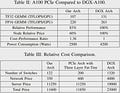

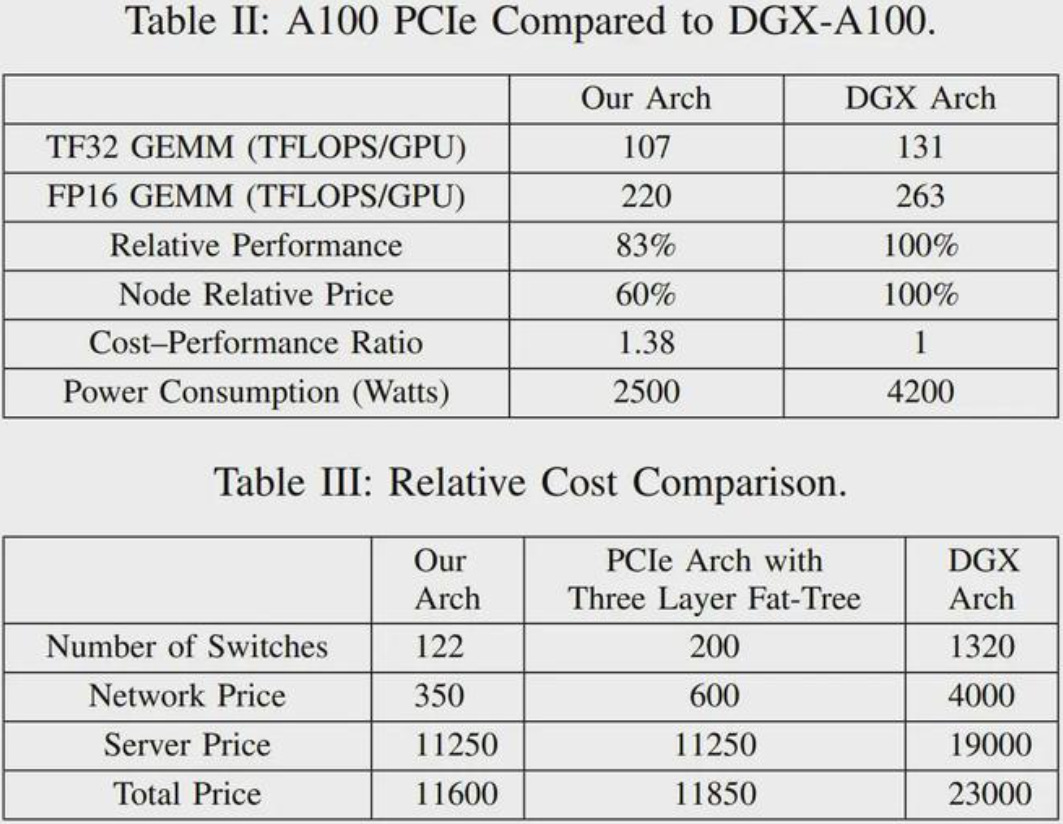

Their architecture has better cost-performance ($$$, energy) than Nvidia's DGX platform.

DeepSeek are both technically impressive and amazingly open: their best models are open source, and here they give all the details of hard-won architecture innovations (which include lots of software optimization).

Impressive raw g applied to AI infrastructure, from a relatively small team. Their model architecture (algo) innovation reported earlier in the year was also considered groundbreaking:

https://arxiv.org/abs/2405.04434

"DeepSeek-V2 adopts innovative architectures including Multi-head Latent Attention (MLA) and DeepSeekMoE. MLA guarantees efficient inference through significantly compressing the Key-Value (KV) cache into a latent vector, while DeepSeekMoE enables training strong models at an economical cost through sparse computation."
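To make the KV cache point concrete, here is a rough back-of-the-envelope sketch in Python. It compares the memory needed to cache full per-head keys and values against caching a single compressed latent vector per token per layer. All the dimensions below (layer count, head count, head size, latent size, context length) are hypothetical numbers I picked for illustration, not DeepSeek-V2's actual configuration, and the sketch ignores any additional per-token state the real MLA design may cache.

```python
def mha_kv_cache_bytes(n_layers, n_heads, head_dim, seq_len, bytes_per_elem=2):
    """Standard multi-head attention: one key and one value vector
    per head, per layer, per cached token."""
    return 2 * n_layers * n_heads * head_dim * seq_len * bytes_per_elem


def latent_kv_cache_bytes(n_layers, latent_dim, seq_len, bytes_per_elem=2):
    """Latent-style cache: one compressed vector per layer, per cached token,
    from which keys and values are reconstructed at attention time."""
    return n_layers * latent_dim * seq_len * bytes_per_elem


# Hypothetical model dimensions (assumptions, not taken from the paper).
N_LAYERS = 32
N_HEADS = 32
HEAD_DIM = 128
LATENT_DIM = 512
SEQ_LEN = 4096

full = mha_kv_cache_bytes(N_LAYERS, N_HEADS, HEAD_DIM, SEQ_LEN)
latent = latent_kv_cache_bytes(N_LAYERS, LATENT_DIM, SEQ_LEN)
ratio = full / latent

print(f"full KV cache:   {full / 2**20:.0f} MiB per sequence")
print(f"latent KV cache: {latent / 2**20:.0f} MiB per sequence")
print(f"reduction:       {ratio:.0f}x")
```

With these made-up numbers the cache shrinks from 2048 MiB to 128 MiB per sequence, a 16x reduction. The real savings depend entirely on the actual latent size and attention layout, so treat this only as an illustration of why compressing the cache matters for inference cost.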

This is an earlier post I made about the company:

https://x.com/hsu_steve/status/1815138049463906376

Note that almost all of its talent was trained in China - it’s a good indication of the highest-level capability available to PRC companies because of ongoing human capital deepening. See discussion in this Manifold episode:

The paper includes a short comment on Huawei (HW) vs Nvidia AI chips. If the HW-Ascend software ecosystem matures (lots of talent for this in PRC), and they solve (with SMIC) 7/5nm production issues, they will give Nvidia a run for its money... Note though that the DeepSeek architecture described in the paper is built using Nvidia A100s.

"Huawei has designed the Ascend AI DSA chip [55], [56], which remains competitive with NVIDIA, as noted by NVIDIA CEO Jensen Huang. These accelerators are tailored for efficient execution of AI workloads, offering specialized features to optimize model training and inference. However, their software ecosystems, while progressing, still require further development to match the maturity of NVIDIA’s offerings."

This is a good article on the new paper from Sina (translated).

Nice strategic overview. Can you please share your recent interview on YouTube? On Spotify it is inaccessible for me, and I believe for many others as well.

Do Chinese scientists in CS+AI have similar views on the future of AI as those in Silicon Valley?